Questions for you:

- When someone in your organisation says something is “likely” or “probable,” what do they actually mean — a measurable frequency, a personal confidence, or social respectability dressed up as analysis? How can you politely find out?

- Are there situations in your work where “probable” is used to lend authority to what is essentially an opinion? What would change if those assessments had to be expressed as explicit numerical probabilities, and evaluated as part of quarterly reviews?

- How comfortable are you and your colleagues with the idea that some probability estimates are inherently personal — best guesses that should be updated as new information arrives — rather than objective facts?

Organisational applications:

From respectability to arithmetic: the challenge to authority: The story’s historical observation has a direct organisational parallel. Before probability became mathematical, “probable” was the language of experts asserting credibility. A physician’s prognosis was probable because the physician was credible, not because the numbers supported it.

Putting probability on a ledger made it testable: if you say there is a 70% chance of this outcome, that claim can be tested against reality over time. Many organisational probability claims still operate in the pre-Cardano mode: they are expressed in language that sounds quantitative but is actually an assertion of credibility. “This project is likely to succeed” said by a senior leader functions as probabilis in the Latin sense, not in the mathematical one. Asking for the number forces a different kind of accountability, and reviewing those estimates afterwards doubly so.

The frequentist/Bayesian distinction in practice: The story’s closing distinction between objective and subjective probability matters practically. A frequentist probability — the proportion of times an outcome occurs across many trials — requires data and is in principle verifiable.

A Bayesian probability — a quantified personal belief that updates with new evidence — is inherently subjective but can still be calibrated and tested over time. Most organisational probability estimates are Bayesian, whether or not the people making them realise it. The problem arises when Bayesian estimates are presented as if they were frequentist ones — when “I believe there’s a seventy per cent chance” is communicated as “the probability is seventy per cent.” Making the distinction explicit, particularly in risk and forecasting contexts, tends to lead to more honest communication and more appropriate scepticism among the audience.
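As a rough illustration of what "updates with new evidence" means in arithmetic terms, the sketch below applies Bayes' rule to a single belief. The function name, the scenario and every number in it are hypothetical, chosen only to show the mechanics; they do not come from the story or from any real project data.

```python
def update_belief(prior, p_evidence_if_true, p_evidence_if_false):
    """Return the revised probability of a claim after seeing one piece of evidence.

    prior: belief in the claim before the evidence (0 to 1)
    p_evidence_if_true: chance of seeing this evidence if the claim is true
    p_evidence_if_false: chance of seeing this evidence if the claim is false
    """
    weight_true = prior * p_evidence_if_true
    weight_false = (1 - prior) * p_evidence_if_false
    return weight_true / (weight_true + weight_false)


# Hypothetical scenario: "I believe there's a seventy per cent chance
# this project succeeds." Then a key milestone is missed. Assume (purely
# for illustration) that 20% of successful projects miss such a milestone
# and 60% of failed ones do.
revised = update_belief(prior=0.7, p_evidence_if_true=0.2, p_evidence_if_false=0.6)
print(f"Revised belief in success: {revised:.0%}")
```

The starting seventy per cent is still a personal estimate, but stating it as a number makes it clear how far a piece of bad news ought to move it.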

Calibration as an organisational discipline: The story notes that probability as a personal belief is not immune to accountability: if you consistently say something has a forty per cent chance and it happens ten per cent of the time, you are wrong, and that wrongness is detectable over time. This is the basis of calibration: the practice of assessing whether your probability estimates match actual frequencies.

Organisations that maintain records of probabilistic forecasts and their outcomes develop better-calibrated estimators over time. Those that make probability claims without tracking them allow systematic overconfidence or underconfidence to persist unchallenged. The simple discipline of writing down probability estimates at the time of a decision and reviewing their accuracy retrospectively is more valuable than most formal forecasting training.
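The record-keeping described above can be very small. The following is a minimal sketch, assuming forecasts are logged as pairs of a stated probability and whether the outcome happened; the ledger contents, the function names and the grouping to one decimal place are illustrative assumptions rather than an established method from the story. It also computes a Brier score (the mean squared gap between stated probability and outcome), a standard summary measure that is swapped in here for convenience.

```python
from collections import defaultdict


def calibration_table(forecasts):
    """Compare each stated probability with how often the outcome happened.

    forecasts: iterable of (stated_probability, happened) pairs.
    Returns {stated_probability: (observed_frequency, number_of_forecasts)}.
    """
    buckets = defaultdict(list)
    for stated, happened in forecasts:
        # Group forecasts by stated probability, rounded to one decimal place.
        buckets[round(stated, 1)].append(1 if happened else 0)
    return {
        stated: (sum(outcomes) / len(outcomes), len(outcomes))
        for stated, outcomes in sorted(buckets.items())
    }


def brier_score(forecasts):
    """Mean squared difference between stated probability and outcome (lower is better)."""
    forecasts = list(forecasts)
    return sum((p - (1 if happened else 0)) ** 2 for p, happened in forecasts) / len(forecasts)


# Hypothetical ledger: ten "forty per cent" calls, of which one came true,
# and ten "seventy per cent" calls, of which seven came true.
ledger = (
    [(0.4, True)] * 1 + [(0.4, False)] * 9
    + [(0.7, True)] * 7 + [(0.7, False)] * 3
)

for stated, (observed, count) in calibration_table(ledger).items():
    print(f"said {stated:.0%}, happened {observed:.0%} of the time ({count} forecasts)")
print(f"Brier score: {brier_score(ledger):.3f}")
```

In this made-up ledger the seventy per cent calls are well calibrated, while the forty per cent calls show the pattern from the story: stated at forty, happening at ten. With only ten forecasts per group the comparison is noisy, so a real review would want more entries before drawing conclusions about any individual estimator.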

Further reading

On the history of probability and the shift from judgement to arithmetic:

Against the Gods: The Remarkable Story of Risk by Peter L. Bernstein. Bernstein’s history of the development of probability and risk covers the conceptual shift the story describes — from probability as social respectability to probability as mathematical calculation — with the historical figures and their contributions treated in accessible detail.

The Unfinished Game: Pascal, Fermat, and the Seventeenth-Century Letter that Made the World Modern by Keith Devlin. Devlin covers the specific moment when probability became formally mathematical, and explains why the shift was so consequential for how uncertainty could be discussed and challenged.

On the frequentist/Bayesian distinction and its practical implications:

The Theory That Would Not Die: How Bayes’ Rule Cracked the Enigma Code, Hunted Down Russian Submarines, and Emerged Triumphant from Two Centuries of Controversy by Sharon Bertsch McGrayne. McGrayne’s account of the history of Bayesian probability covers the philosophical dispute between frequentist and Bayesian interpretations, with practical examples of where the distinction matters — directly relevant to the story’s closing point.

Superforecasting: The Art and Science of Prediction by Philip Tetlock and Dan Gardner. Tetlock’s research on forecasting calibration is the most practical available account of how subjective probability estimates can be made more accurate through explicit tracking and updating — the applied version of the story’s argument.

On probability language, communication, and the gap between perceived and actual confidence:

Noise: A Flaw in Human Judgment by Daniel Kahneman, Olivier Sibony and Cass R. Sunstein. The chapters on communication and calibration cover the gap between how probability language is understood and how it is intended, with evidence for how much variability results from treating vague qualitative terms as shared quantitative concepts.

Thinking in Bets: Making Smarter Decisions When You Don’t Have All the Facts by Annie Duke. Duke’s framework for treating all claims about uncertain outcomes as implicit probability estimates — and for making those estimates explicit so they can be evaluated and updated — is the organisational application of the story’s argument about putting chance on a ledger.

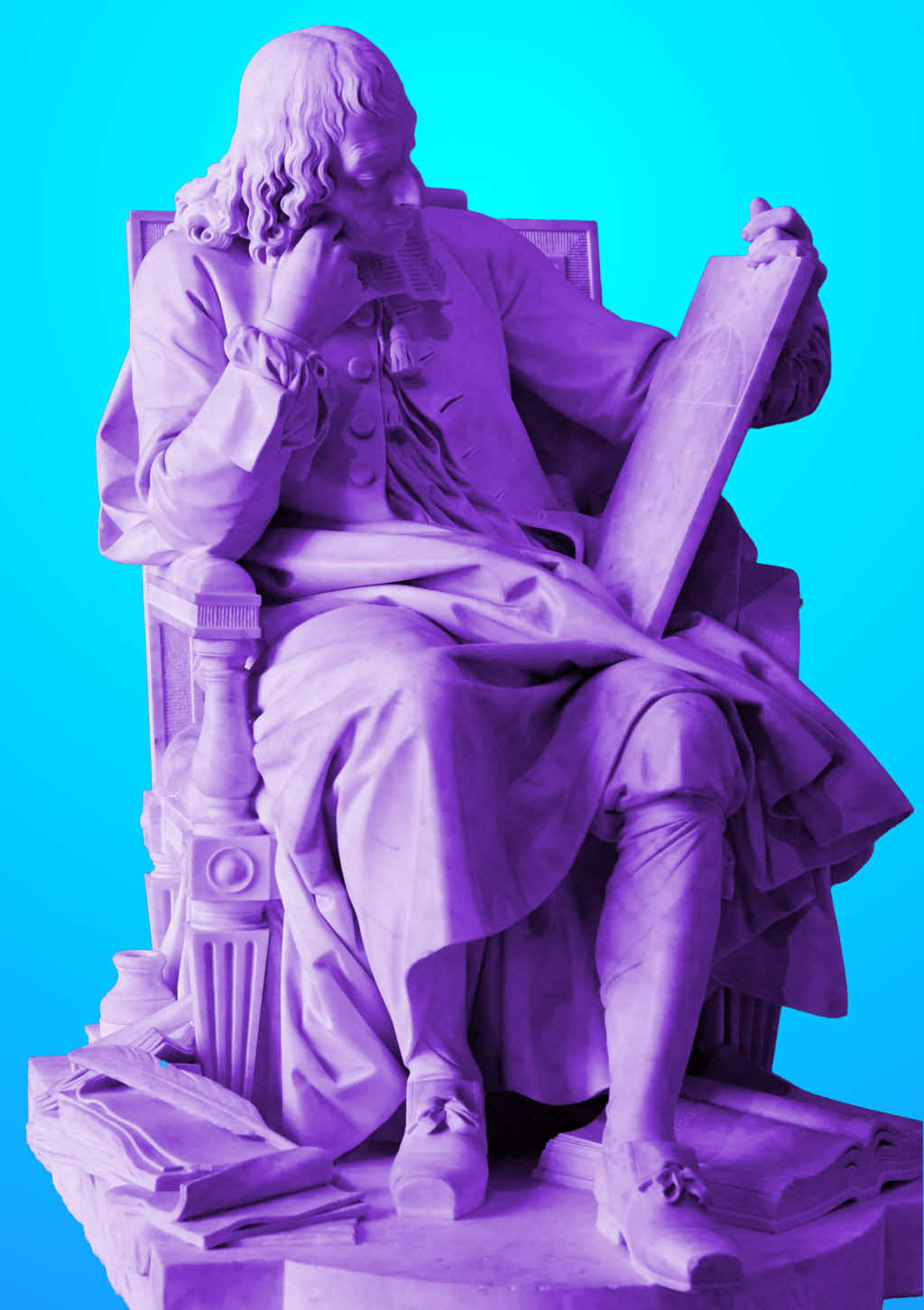

About the image

A photograph by Jastrow of a statue of Blaise Pascal in the Louvre: https://commons.wikimedia.org/wiki/File:Pascal_Pajou_Louvre_RF2981.jpg

Photo montage by Matt Ballantine, 2026