Questions for you:

- When you or someone in your organisation makes a probability estimate (“this is likely,” “there’s a good chance of,” “we’re fairly confident that”), how often is there an actual enumeration of possible outcomes behind the phrase, and how often is it gut feel dressed up as analysis?

- Can you think of a decision your organisation made recently where properly defining the sample space — listing all genuinely possible outcomes — would have changed the framing?

- Where do you see superstition or ritual substituting for probability thinking in your working environment? How can you change that for the better?

Organisational applications:

Defining the sample space before estimating probabilities: Cardano’s contribution was not sophisticated mathematics but a prior conceptual move: you cannot calculate odds until you have defined all possible outcomes. Most organisational probability estimates skip this step entirely.

A team that says “we think this project is 70% likely to succeed” has typically arrived at that figure through felt confidence. It has not first enumerated what success, partial success, delay, and failure would each look like, and then estimated their relative likelihood. Forcing an explicit definition of the sample space (what are all the possible outcomes here, not just the ones we are planning for?) tends to surface scenarios that gut-feel estimates had suppressed. It is unglamorous work, but it is the precondition for a probability estimate to mean anything useful.
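To make the enumeration step concrete, here is a minimal sketch in Python using two dice, an example in the spirit of the games of chance Cardano studied. The function names are illustrative only, and the calculation assumes every listed outcome is equally likely, which holds for fair dice but rarely for projects.

```python
from fractions import Fraction
from itertools import product


def two_dice_sample_space():
    """List every outcome of rolling two six-sided dice as (first, second)."""
    return list(product(range(1, 7), repeat=2))


def probability(event, sample_space):
    """Favourable outcomes divided by all outcomes.

    Only valid when every outcome in sample_space is equally likely.
    """
    favourable = [outcome for outcome in sample_space if event(outcome)]
    return Fraction(len(favourable), len(sample_space))


space = two_dice_sample_space()
p_seven = probability(lambda roll: sum(roll) == 7, space)

print(len(space))  # 36 possible outcomes
print(p_seven)     # 1/6
```

The point is the order of operations: the list of outcomes is built first, and the probability is only computed once that list exists.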

The difference between intuition and enumeration in risk assessment: The story notes that before Cardano, people approached chance through gut instinct, pattern-spotting, and superstition. In organisational risk assessment, the same modes persist alongside formal-looking frameworks.

A risk register that lists threats with probability scores, but where those scores were generated by asking a senior leader to rate likelihood from one to five, is closer to intuition than to enumeration. The Cardano test is simple: for any probability estimate, ask whether the person who produced it could describe the sample space from which it was drawn. If they cannot, the number is a dressed-up gut feel rather than a calculation, and should be treated accordingly.

Using sample space thinking to challenge assumed certainty: One of the more useful applications of the sample space idea is running it in reverse: if a plan or forecast treats a particular outcome as near-certain, ask what the full set of possible outcomes actually is, and whether the confident estimate is justified by that set.

Projects that are described as “on track” often have implicit sample spaces that exclude entire categories of failure. Strategic plans that present a single scenario as the expected future have typically defined their sample space as containing only variations on a preferred outcome. Making the enumeration explicit tends to produce more honest probability ranges and more robust contingency planning.
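One way to see the effect of an implicit sample space is to put rough relative weights on every outcome, then compare the probability of success with and without the outcomes the plan ignores. The sketch below does this in Python. The weights and the choice of which outcomes are "planned for" are invented purely for illustration; they are not drawn from any real project.

```python
from fractions import Fraction


def normalise(weights):
    """Turn relative weights into probabilities that sum to 1."""
    total = sum(weights.values())
    return {name: Fraction(weight, total) for name, weight in weights.items()}


# Hypothetical relative weights for every outcome the team can think of.
full_space = {"success": 4, "partial success": 2, "delay": 3, "failure": 1}

# Hypothetical plan that only considers variations on the preferred outcome.
planned_for = {"success", "partial success"}

implicit = normalise({k: w for k, w in full_space.items() if k in planned_for})
explicit = normalise(full_space)

print(implicit["success"])  # 2/3 when delay and failure are left out
print(explicit["success"])  # 2/5 once the full sample space is counted
```

Nothing about the project changes between the two figures. The only difference is whether delay and failure were allowed into the list before the estimate was made.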

Further reading

On the history of probability and Cardano’s contribution:

Against the Gods: The Remarkable Story of Risk by Peter L. Bernstein. Bernstein covers Cardano’s role in the history of probability in accessible detail, including the significance of the sample space idea and why it took so long for the conceptual tools to develop.

The Unfinished Game: Pascal, Fermat, and the Seventeenth-Century Letter that Made the World Modern by Keith Devlin. Devlin picks up where Cardano left off, covering the Pascal-Fermat correspondence that built probability theory into a formal discipline. A useful complement to the Cardano story.

On probability thinking in practice:

The Drunkard’s Walk: How Randomness Rules Our Lives by Leonard Mlodinow. Mlodinow’s account of how probability intuition fails and how enumeration corrects it covers the same conceptual ground as the story with contemporary examples.

Thinking in Bets: Making Smarter Decisions When You Don’t Have All the Facts by Annie Duke. Duke’s practical framework for treating decisions as probability estimates requires exactly the sample space discipline the story describes — you cannot assign meaningful probabilities without first being honest about what the possible outcomes actually are.

On forecasting, calibration, and the gap between felt confidence and actual accuracy:

Superforecasting: The Art and Science of Prediction by Philip Tetlock and Dan Gardner. Tetlock’s research on what distinguishes accurate forecasters from inaccurate ones is directly relevant: the practices that work — explicit probability estimates, enumeration of alternatives, willingness to update — are the disciplined successors to Cardano’s original insight that chance can be counted.

Noise: A Flaw in Human Judgment by Daniel Kahneman, Olivier Sibony and Cass R. Sunstein. Covers the variability in human probability estimates even among trained professionals, making the case that intuitive probability assessment without structural discipline is less reliable than it appears.

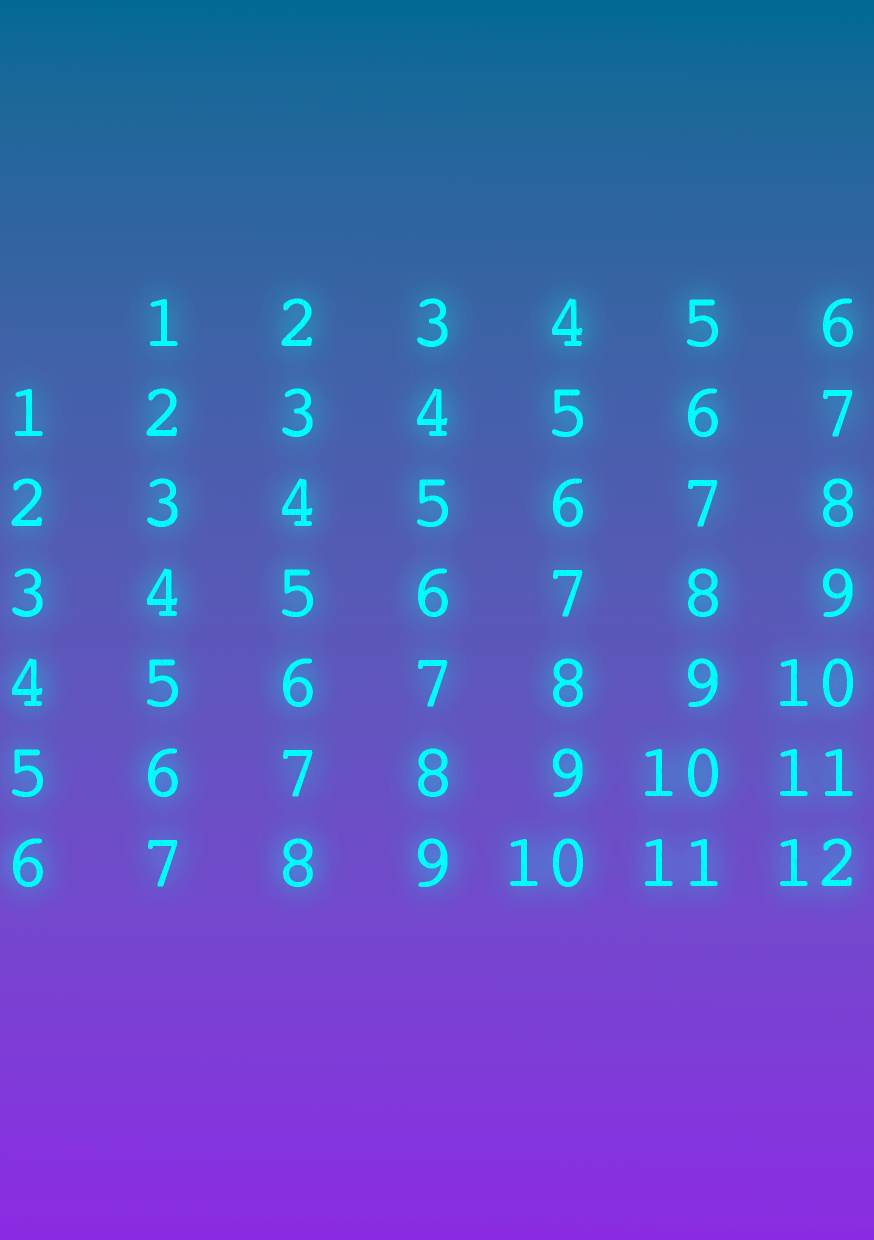

About the image

This was the very last image created for the book. I’d missed it in the earlier passes, and I needed an image quickly before things could be sent to print. You might read that as an excuse…

Photo montage by Matt Ballantine, 2026